
Workflow

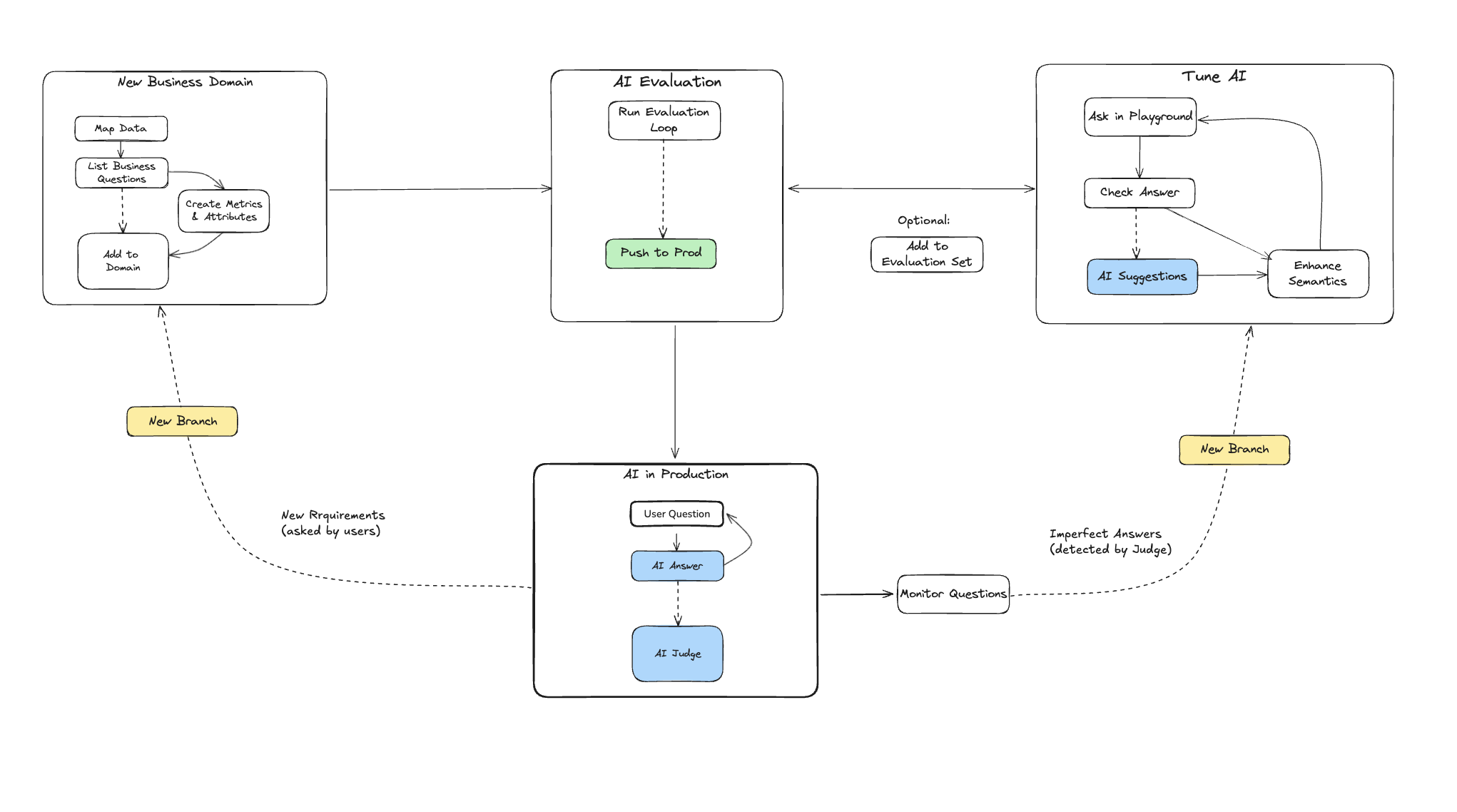

The Honeydew AI workflow helps data teams keep AI responses stable and improve their quality in response to user demands. Data teams using Honeydew to build production-grade AI systems typically follow three key approaches:

- Metadata: Define AI-specific metadata to clarify intent and resolve ambiguities or inconsistencies in the data.

- Evaluation: Regularly validate AI outputs by testing them against a curated set of reference questions.

- Monitoring: Continuously track new AI responses to gauge overall quality and identify specific modeling issues.
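The evaluation approach can be sketched as a small harness that runs each reference question through an agent and reports a pass rate. This is a minimal Python sketch, not Honeydew's API: `ReferenceQuestion`, `evaluate`, and `stub_agent` are hypothetical names, and the check assumes an exact match on normalized answer text, whereas a production check would more likely compare query results or numeric values.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ReferenceQuestion:
    """A curated business question and the answer the data team expects (hypothetical)."""
    question: str
    expected: str


def normalize(answer: str) -> str:
    """Lowercase and collapse whitespace so formatting noise is not counted as a failure."""
    return " ".join(answer.lower().split())


def evaluate(ask: Callable[[str], str],
             reference_set: list[ReferenceQuestion]) -> tuple[float, list[str]]:
    """Ask every reference question and return (pass_rate, failed_questions).

    Assumes exact match on normalized text; real checks may compare result values.
    """
    failures = []
    for ref in reference_set:
        if normalize(ask(ref.question)) != normalize(ref.expected):
            failures.append(ref.question)
    if not reference_set:
        return 1.0, failures
    return (len(reference_set) - len(failures)) / len(reference_set), failures


# Hypothetical stand-in for an AI agent; a real run would call the deployed agent.
CANNED_ANSWERS = {
    "What was total revenue in 2024?": "Revenue 2024: 1.2M",
    "How many active customers are there?": "3,410",
}


def stub_agent(question: str) -> str:
    return CANNED_ANSWERS.get(question, "")


reference_set = [
    ReferenceQuestion("What was total revenue in 2024?", "revenue 2024:  1.2M"),
    ReferenceQuestion("How many active customers are there?", "3,400"),
]

rate, failed = evaluate(stub_agent, reference_set)
print(rate, failed)  # 0.5 ['How many active customers are there?']
```

Keeping the agent behind a plain callable lets the same harness run against a stub while developing and against a real agent during scheduled evaluation.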

Agents

Honeydew AI operates through agents within a workspace and a branch. You can create multiple agents within the same workspace, each targeting different analytical needs or user groups.

Setting up a new business domain

Setting up a new business domain includes:

- Set up Semantic Layer Definitions: Map data sources to a semantic layer and create the relevant metrics or entities.

- Create Context: Capture the organizational knowledge, analytical skills, and historical events that guide the AI.

- Create an Agent: Define which domain and context items the AI can access.

- Create AI Metadata: Add field-level descriptions, synonyms, and hints.

- Test Business Questions: Build a set of business questions to evaluate the AI Analyst.
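To illustrate why field-level synonyms and hints matter, the sketch below models AI metadata for two fields and flags a user term that maps to more than one of them. It is a hypothetical Python model, not Honeydew's metadata format: `FieldMetadata`, `resolve_term`, and the `orders.*` field names are invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class FieldMetadata:
    """Hypothetical AI metadata attached to one semantic-layer field."""
    name: str                                          # e.g. "orders.net_revenue"
    description: str
    synonyms: list[str] = field(default_factory=list)
    hint: str = ""


def resolve_term(term: str, fields: list[FieldMetadata]) -> list[str]:
    """Return every field whose short name or synonyms match `term` (case-insensitive).

    More than one match means the term is ambiguous and the metadata needs refining.
    """
    t = term.strip().lower()
    matches = []
    for f in fields:
        short_name = f.name.split(".")[-1].lower()
        if t == short_name or t in (s.lower() for s in f.synonyms):
            matches.append(f.name)
    return matches


# Illustrative metadata only; both fields claim the synonym "sales".
sales_fields = [
    FieldMetadata("orders.net_revenue", "Revenue after discounts and refunds",
                  synonyms=["revenue", "sales"], hint="Default revenue metric"),
    FieldMetadata("orders.gross_revenue", "Revenue before discounts",
                  synonyms=["gross sales", "sales"]),
]

print(resolve_term("revenue", sales_fields))  # ['orders.net_revenue']
print(resolve_term("sales", sales_fields))    # ['orders.net_revenue', 'orders.gross_revenue']
```

Here "sales" matches two fields, which is the kind of ambiguity a more specific synonym list or a hint is meant to remove before the AI has to guess.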

Maintaining an active business domain

The more frequently a domain is used, the more critical it becomes to actively monitor its quality and refine the semantic layer as needed. Activities on an active domain include:

- Monitor AI Responses to find gaps and improve the semantic model accordingly.

- Add New Business Questions to the evaluation set to cover new business demands.

- Keep testing Known Business Questions as the semantic layer and data change, to catch regressions.
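Regression testing on known business questions amounts to comparing two evaluation runs. A minimal Python sketch, assuming each run is stored as a mapping from question text to pass/fail (a hypothetical format; `find_regressions` is likewise an illustrative name, not part of Honeydew):

```python
def find_regressions(baseline: dict[str, bool],
                     current: dict[str, bool]) -> dict[str, list[str]]:
    """Compare two evaluation runs keyed by question text (hypothetical format)."""
    # Passed in the baseline run but fails now.
    regressed = [q for q, ok in current.items() if not ok and baseline.get(q) is True]
    # Failed in the baseline run but passes now.
    fixed = [q for q, ok in current.items() if ok and baseline.get(q) is False]
    # Questions added to the evaluation set since the baseline run.
    new = [q for q in current if q not in baseline]
    return {"regressed": regressed, "fixed": fixed, "new": new}


baseline = {
    "Total revenue in 2024?": True,
    "Active customers by region?": True,
    "Churn rate last quarter?": False,
}
current = {
    "Total revenue in 2024?": True,
    "Active customers by region?": False,       # passed before, fails now
    "Churn rate last quarter?": True,           # fixed since the baseline
    "Average order value by channel?": True,    # newly added question
}

report = find_regressions(baseline, current)
print(report["regressed"])  # ['Active customers by region?']
print(report["fixed"])      # ['Churn rate last quarter?']
print(report["new"])        # ['Average order value by channel?']
```

One possible policy is to hold back a semantic-layer change, for example on a development branch, while `regressed` is non-empty. Newly added questions have no baseline, so they are reported separately rather than counted as regressions.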